Virtualization: Past, Present, and Future

Virtualization has quietly transformed the backbone of modern computing. It powers the cloud, streamlines data centers, and fuels digital transformation across industries. But it didn’t start this way. Its story began decades ago, in the era of mainframes and punch cards.

The History of Virtualization

In the 1960s, IBM engineers faced a challenge: how to run multiple programs efficiently on a single, expensive mainframe. Their solution was the virtual machine (VM): an isolated environment that acted as its own computer. This innovation let one physical machine host many virtual ones, each running independently.

Fast-forward to the late 1990s. Data centers were overflowing with underutilized x86 servers. VMware’s arrival changed that. By introducing x86 virtualization, it allowed multiple virtual servers to run on one physical box, dramatically cutting costs and improving flexibility. In the years that followed, Microsoft (Hyper-V), Citrix, and open-source solutions like KVM and Xen joined in, fueling the modern data center revolution.

The Technology Today

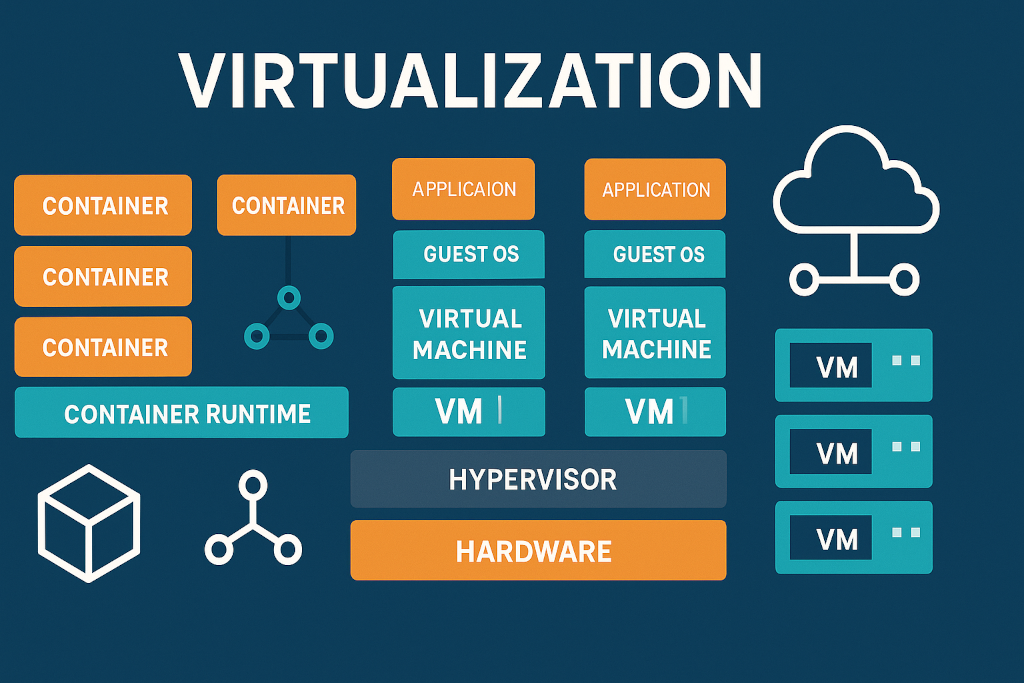

Modern virtualization has become the invisible infrastructure behind nearly everything digital. At its core lies the hypervisor, a software layer that abstracts physical hardware and allocates virtual resources to each guest system.

From hardware at the bottom to the cloud at the top, virtualization stacks create flexible, efficient environments that can host everything from single workloads to entire cloud ecosystems.
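To make the hypervisor's allocation role concrete, here is a minimal sketch in Python. It is a toy model, not how any real hypervisor is implemented: the `Host` class, its fields, and the guest names are all hypothetical, and it refuses to overcommit, whereas real hypervisors often allow CPU or memory overcommitment.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """Physical capacity that a hypothetical hypervisor can hand out to guests."""
    vcpus: int
    memory_gb: int
    guests: dict = field(default_factory=dict)  # guest name -> (vcpus, memory_gb)

    def free(self):
        """Return (free vCPUs, free memory in GB) after current reservations."""
        used_cpu = sum(g[0] for g in self.guests.values())
        used_mem = sum(g[1] for g in self.guests.values())
        return self.vcpus - used_cpu, self.memory_gb - used_mem

    def allocate(self, name, vcpus, memory_gb):
        """Reserve resources for a guest; refuse duplicates and overcommitment."""
        free_cpu, free_mem = self.free()
        if name in self.guests or vcpus > free_cpu or memory_gb > free_mem:
            return False
        self.guests[name] = (vcpus, memory_gb)
        return True


host = Host(vcpus=16, memory_gb=64)
print(host.allocate("web", 4, 16))    # True
print(host.allocate("db", 8, 32))     # True
print(host.allocate("batch", 8, 32))  # False: only 4 vCPUs and 16 GB remain
print(host.free())                    # (4, 16)
```

Each guest sees only the slice it was given, which is the isolation property the section describes.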

The Rise of Containerization

While traditional VMs virtualize hardware, containers virtualize the operating system itself. This means they share the host OS kernel, resulting in far lighter, faster, and more portable workloads.

Containers became mainstream thanks to Docker, and their scalability and portability were supercharged with Kubernetes, an orchestration system that automates deployment, scaling, and management of containerized applications.
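As an illustration of what "automated scaling" means in practice, the sketch below follows the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). It is a simplified sketch; the function name, the min/max defaults, and the sample numbers are assumptions, and the real autoscaler also applies a tolerance band and stabilization windows that are omitted here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Scale the replica count in proportion to how far the metric is from target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(4, current_metric=90, target_metric=60))   # 6
print(desired_replicas(4, current_metric=30, target_metric=60))   # 2
print(desired_replicas(4, current_metric=600, target_metric=60))  # 10 (capped)
```

With four replicas running at 90% CPU against a 60% target, the rule asks for six; when load halves, it scales back down to two.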

Containers vs Virtual Machines

- Virtual Machines: Each VM runs its own OS, consuming more resources but offering stronger isolation — ideal for legacy or security-sensitive workloads.

- Containers: Share the host OS, start in seconds, and consume less memory — perfect for microservices, cloud-native apps, and CI/CD pipelines.

This shift toward containerization has allowed organizations to deploy applications faster, with less overhead, and to scale out across regions far more quickly than VM-only deployments allow.

Current Trends Shaping Virtualization

- Hybrid and Multi-Cloud Virtualization

Most organizations now run workloads across on-premises, private, and public cloud environments. Hypervisors and virtualization platforms like VMware vSphere, Microsoft Hyper-V, KVM, Proxmox VE, and Nutanix AHV form the virtualization backbone, providing compute, storage, and network abstraction.

Above that, platforms such as VMware Cloud Foundation, Red Hat OpenShift, and Azure Arc unify management, automation, and policy enforcement across diverse environments.

These platforms don’t virtualize hardware themselves. Instead, they govern and orchestrate the layers beneath them, giving IT teams consistent visibility and control across hybrid ecosystems.

- AI and Automation

AI-driven virtualization platforms now predict workloads, optimize performance, and automatically allocate compute or storage where it’s needed most.

- Security, Segmentation, and Isolation

Virtualization has become a core part of Zero-Trust architecture. Features like micro-segmentation (VMware NSX, Nutanix Flow), secure enclaves, and hardware-based trusted execution environments enhance workload isolation. This helps ensure that even if one VM or container is compromised, others remain unaffected, a critical layer in modern cyber resilience.

- Edge and Distributed Virtualization

With the growth of IoT and edge computing, workloads are increasingly deployed closer to where data is generated. Lightweight virtualization and container platforms like K3s, Harvester, and Proxmox Edge allow for running virtual machines and containers efficiently in remote or resource-constrained environments. This shift is essential for industries like logistics, manufacturing, and transportation, where latency, uptime, and local processing matter as much as central compute power.

- Open and Vendor-Neutral Virtualization

There’s a growing shift toward open ecosystems and vendor independence.

Solutions like Proxmox, Nutanix, and KVM-based infrastructures give organizations flexibility without locking them into proprietary licensing or hardware.

This trend reflects a broader desire for transparency, customization, and cost control — aligning with open-source and hybrid philosophies that value freedom over closed architectures.
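The micro-segmentation idea from the security trend above boils down to a default-deny rule: traffic between segments passes only if it is explicitly allowed. The sketch below illustrates that rule in Python. It does not use the VMware NSX or Nutanix Flow APIs; the segment names, ports, and the `is_allowed` helper are hypothetical.

```python
# Hypothetical allow-list of flows: (source segment, destination segment, port).
ALLOWED_FLOWS = {
    ("web", "app", 8080),
    ("app", "db", 5432),
}


def is_allowed(src, dst, port, rules=ALLOWED_FLOWS):
    """Default deny: a flow passes only if it is explicitly listed."""
    return (src, dst, port) in rules


print(is_allowed("web", "app", 8080))  # True
print(is_allowed("web", "db", 5432))   # False: web may not reach db directly
```

Because nothing is permitted unless listed, a compromised web tier in this model has no direct path to the database segment.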

The Future: Intelligent, Distributed, and Sustainable

The next phase of virtualization will focus on intelligence and autonomy:

- Micro-VMs and Serverless Computing: Tiny, ephemeral instances that start and stop in milliseconds for event-based workloads.

- Self-Optimizing Virtualization: Systems that learn, adapt, and self-heal with minimal human intervention.

- Sustainable Cloud Operations: Dynamic workload migration to greener data centers based on energy consumption patterns.

- AI-Enhanced Orchestration: Predictive scaling and real-time remediation to prevent downtime before it occurs.
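As a rough illustration of the sustainable-operations idea in the list above, the sketch below picks the lowest-carbon site that still has room for a workload. Everything in it is an assumption made for illustration: the `pick_site` function, the site names, and the carbon-intensity figures are hypothetical, and a real scheduler would also weigh latency, cost, and data residency.

```python
def pick_site(sites, needed_vcpus):
    """Return the name of the lowest-carbon site with enough free capacity, or None."""
    candidates = [s for s in sites if s["free_vcpus"] >= needed_vcpus]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s["gco2_per_kwh"])["name"]


# Hypothetical sites and carbon-intensity figures, for illustration only.
SITES = [
    {"name": "north", "free_vcpus": 8, "gco2_per_kwh": 50},
    {"name": "central", "free_vcpus": 64, "gco2_per_kwh": 300},
    {"name": "south", "free_vcpus": 32, "gco2_per_kwh": 120},
]

print(pick_site(SITES, 4))    # north
print(pick_site(SITES, 16))   # south
print(pick_site(SITES, 128))  # None
```

A small workload lands on the greenest site, while a larger one falls back to the next-greenest site that has capacity.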

How Tecative Can Help

At Tecative, we understand that every organization’s virtualization journey is unique.

Whether you’re just starting to consolidate your infrastructure, moving workloads to the cloud, or embracing containerized applications, we can help you:

- Define your needs: Assess your existing workloads and infrastructure to determine the best virtualization strategy.

- Deploy the right solutions: From VMware and Hyper-V to Kubernetes, Docker, or hybrid cloud environments, Tecative ensures your implementation is efficient, secure, and scalable.

- Optimize for the future: We help you automate, monitor, and fine-tune your virtual environments for peak performance and cost efficiency.

With Tecative, virtualization isn’t just a technology; it’s a path to smarter, leaner, and more resilient IT operations.
